Office of the Vice President for Research

480 nodes, 14,392 CPU cores, 72 TB RAM, 5.2 PB storage, and 1,440 TeraFLOPS

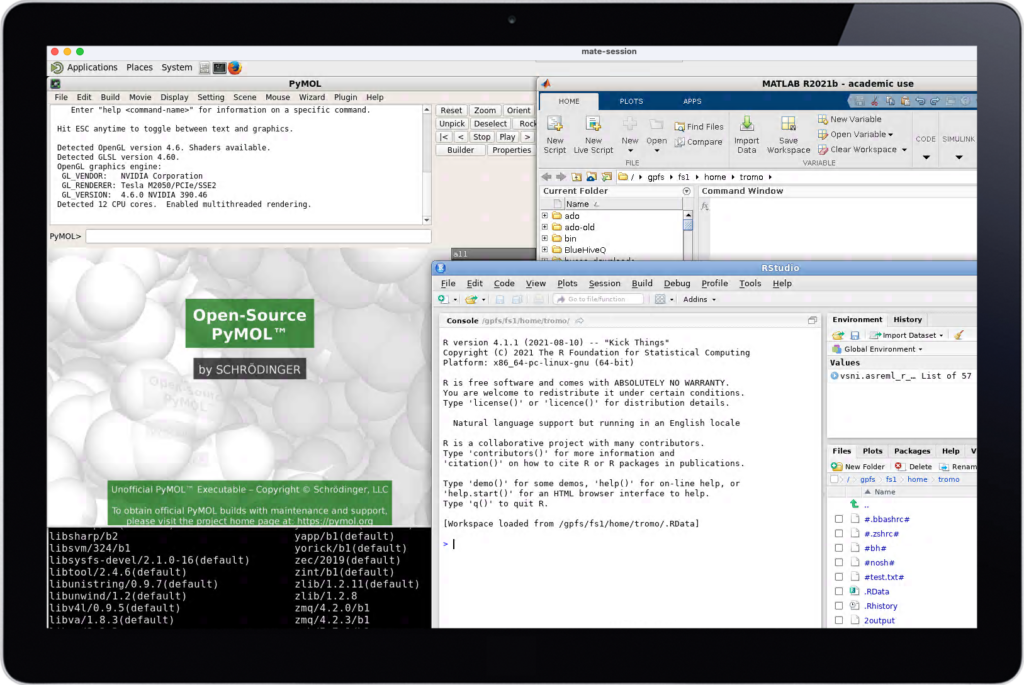

The BlueHive cluster is CIRC’s primary Linux cluster for demanding computations and provides approximately 1,440 TFLOPS of computing capacity. It consists of 480 nodes linked by a high-speed, low-latency InfiniBand interconnect. The most recent addition to the BlueHive cluster comprises dual-processor systems with up to 96 cores per node and memory ranging from 20 GB to 3 TB. Several compute nodes are equipped with dedicated GPU accelerators, including NVIDIA K80 (Kepler), V100 (Volta), and A100 (Ampere) cards. In addition, some nodes are dedicated to running “big data” analytics applications such as Hadoop and Spark.

The entire BlueHive cluster is connected over InfiniBand to a storage system that provides approximately 5.2 PB of configurable raw disk in a GPFS file system. Over 200 nodes of varying capacity have been integrated into BlueHive for faculty investigators who have purchased additional priority-based compute capacity. CIRC runs the SLURM resource scheduler and queuing system to optimize utilization and to support many concurrent users of the BlueHive environment. Users can also connect to BlueHive through a browser-based interface that supports resumable sessions.

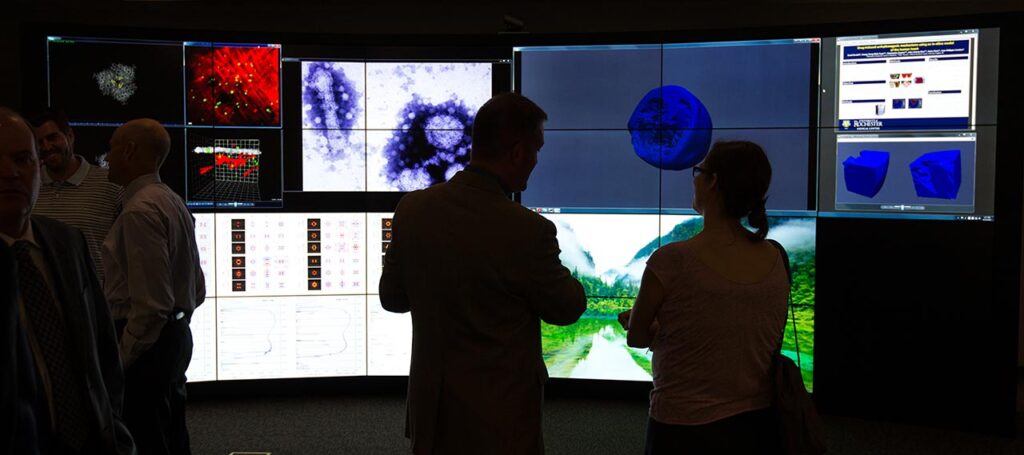

The Visualization-Innovation-Science-Technology-Application (VISTA) Collaboratory is a state-of-the-art visualization lab for the collaborative exploration of large, complex datasets. Its interactive, tiled display wall renders massive datasets in real time, allowing faculty researchers to visualize and analyze complex data instantly, together with colleagues and students.

Office of the Vice President for Research

Center for Advanced Research Technologies

Clinical and Translational Science Institute

Del Monte Institute for Neuroscience

Goergen Institute for Data Science

Hajim School of Engineering and Applied Sciences

Health Sciences Center for Computational Innovation (HSCCI)

Information Technology

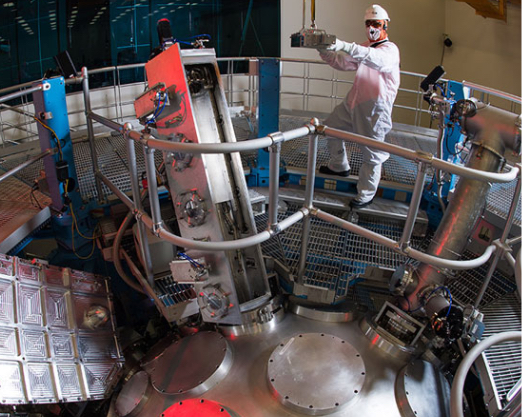

Laboratory for Laser Energetics

Miner Library

River Campus Libraries

Rochester Informatics

School of Arts and Sciences

School of Medicine and Dentistry

University of Rochester Research

UR Health Lab